The regulation of artificial intelligence ethics is now a “live political issue”, with several heavyweight players calling for urgent action on the matter.

With $30 million in funding in this year’s federal budget and a growing push from the Opposition for legislated regulations, it is likely to become a major issue in the lead-up to the next federal election.

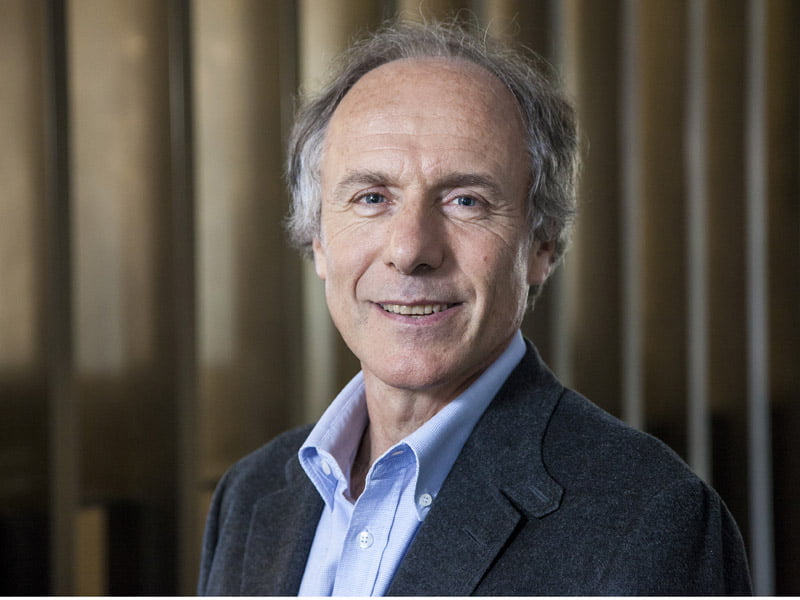

Australia’s Chief Scientist Dr Alan Finkel has urged all political parties to get on the front foot and address the myriad issues that artificial intelligence is creating.

“Artificial intelligence is coming on like a freight train. We will look back on 2018 as the year that artificial intelligence really crashed into public awareness, triggering both excitement and concern. I welcome that conversation,” Dr Finkel told InnovationAus.com.

The Transport Workers Union raised the issue at the recent NSW Labor Conference, while its federal counterparts are believed to be drafting a similar policy to be debated at the national conference at the end of the year.

The federal government provided $30 million in this year’s budget for the creation of an AI roadmap and national AI ethics framework.

Across the ditch, New Zealand is urgently developing an AI action plan and ethical framework, while late last year Germany became the first country to legislate a series of ethical rules for autonomous vehicles.

In Australia, the issue is currently centred on how artificial intelligence could be regulated to ensure it is ethical, with a particular emphasis on driverless cars.

In May this year, Dr Finkel floated the idea of a “Turing stamp” for artificial intelligence providers, similar to the Fairtrade logo on coffee.

Under Dr Finkel’s proposal, application for the stamp would be voluntary, but the assessment would be conducted by a third party.

But the Chief Scientist’s proposal was slammed by Transport Workers Union national secretary Tony Sheldon, who said the voluntary certification process is “miles off the protections we need to keep people safe and alive in Australia”.

“Voluntary certification fails to provide the regulation necessary to protect and fails to recognise the inability of a machine to predict human behaviour and make humane ethical decisions,” Mr Sheldon said.

Dr Finkel responded that his proposal is meant to complement existing regulations covering applications of AI such as medical devices and autonomous vehicles.

“I am not proposing the Turing Certificate as a substitute for these systems.

“Rather, it would be a complement intended to aid consumers to put their purchasing power behind companies who clear the high bar for ethics – just as the voluntary Fairtrade mark allows us to communicate our values to the suppliers of chocolate and coffee,” Dr Finkel said.

“Specifically, this voluntary certificate system is intended to cover applications of artificial intelligence software such as social media sites and digital home assistants that do not affect health and safety.

“The voluntary certificate system would be established through a community consultation process and each company and product would be independently audited.”

Dr Finkel said that existing regulations will quickly need to be “expanded and strengthened” to cover the ethical decisions that will need to be made by driverless vehicles.

“Individual companies should use ethical approaches in the development of all their software.

“But they must be held to external standards for the behaviour of artificial intelligence software where that software affects our wellbeing,” he said.

Mr Sheldon said now is the time for political parties to address the issue, warning that a failure to regulate the space could lead to potentially catastrophic social and ethical problems, including job losses, wage polarisation and accidents.

“I think it is absolutely a live political issue,” he said.

“It is the first time that a party, to my knowledge, has adopted a formal position on AI, particularly regarding the ethical use of AI. Economics is driving the push for artificial intelligence, not voters and not the community.

“There is no input into the introduction of this technology on to our roads and into our homes which is taking ethical or social issues into account. We need a debate on this issue and we need regulation,” he said.

The TWU is likely to push for formal legislation at the Labor national conference. The shadow minister for the digital economy Ed Husic has also emphasised the importance of the government getting on the front foot in terms of AI and ethics.

“On the world stage, Australia has a trusted voice – and that’s why I think we should put that voice to use, getting nations to think about the way AI is developed and applied.

“Considering the varied applications of AI and robotics – which are only going to become more and more integral to how we live and our work – we need to work on a set of common ethics and standards,” Mr Husic said late last year.

Ethical regulations for artificial intelligence can’t be left to the tech giants, Mr Sheldon said.

“Sand-shoe wearing billionaire entrepreneurs who are driven by profits are in no position to make up ethical rules that permit a machine to decide who dies in a car crash.

“Technology, however advanced, will not be able to consider all the variables and make conscious ethical decisions in the same way a human would,” he said.
